The AI Reckoning: What the Evidence Actually Says, and What We Should Do About It

A Moment of Honest Reflection

Lately I have found myself in a growing number of conversations about the ethical and environmental side of artificial intelligence. Somewhere in the middle of one of them, I had an uncomfortable realisation: I was floundering. I was leaning on commonsense observations, broadly held assumptions, and the kind of anecdotal reasoning that sounds persuasive in the room but does not hold up under scrutiny. That did not sit comfortably with me.

So I did something about it. I put in some hard yards doing proper research. That process started, somewhat inevitably, with AI-assisted research tools, but I was deliberate about how I used them. I specifically prompted for breadth rather than confirmation, asked for counter-views, and made a point of verifying sources against peer-reviewed articles and credible institutional reports. I then loaded the material into Google NotebookLM to build a structured foundation before going deeper. And go deeper I did: several full articles read in their entirety, hours of substantive content consumed. I had not planned to go that far. But each layer I peeled away made me more concerned, and that concern made it feel more important to get this right. Intellectual honesty has a momentum of its own once you let it start moving.

The moment that crystallised everything came from an unexpected direction. I had asked NotebookLM to produce an audio summary of the research, specifically framed for ‘presenting at a team meeting’, something accessible that would help a non-specialist audience engage with the material without being overwhelmed. The summary did its job. It covered the key findings clearly and progressively. But as it moved toward its conclusion, the tone shifted. The predictions about exponential growth in power and water consumption, if current trajectories continue unchecked, became increasingly stark. And then, at the very end, the AI-generated voice wrapped up with what could only be described as a sarcastic sign-off: ‘Good luck at your next team meeting.’

I laughed. And then I sat with it for a while. Because buried in that throwaway line was something pointed. The research, synthesised and reflected back through an AI tool, had essentially arrived at the same place I had: the scale of what is coming is genuinely difficult to communicate in a setting designed for forty-five-minute agendas and action points. The gap between the evidence and the average organisational conversation about AI is significant.

This article is my attempt to help close that gap, with honesty about the challenges and equal honesty about the reasons for genuine, evidence-based optimism.

Warning. This is a long read.

I went down a rabbit hole!

I want to be clear about my own position going into this. I am not an AI sceptic. I use these tools daily, I help leaders think through how to adopt them, and I believe the productivity and creative potential they unlock is real. But I am increasingly convinced that enthusiasm without literacy is a liability, and that the most valuable thing I can do, both in my coaching work and in writing like this, is model what it looks like to engage with AI seriously rather than selectively.

What the Evidence Actually Shows

The Ethical Dimension

The most persistent myth in AI adoption is that these systems are neutral because they are mathematical. The reality, documented across sources including the USC Annenberg analysis of AI’s ethical dilemmas, comparative framework research across IEEE, EU, and OECD guidelines, and the Stanford HAI AI Index Report, is that AI systems inherit the biases of their training data, and that data reflects the inequalities of the world that produced it.

The consequences are not theoretical. In hiring, AI screening tools have systematically disadvantaged applications from women in technical roles. In lending, algorithmic credit-scoring has consistently produced worse outcomes for applicants from minority communities. In facial recognition, error rates for darker-skinned individuals run dramatically higher than for lighter-skinned ones, a disparity with serious implications when such systems inform identification decisions. These are documented patterns across multiple sectors and geographies, not edge cases.

This matters to me personally, not just academically. One of the core arguments I make in my work is that leadership is fundamentally about how power is exercised and who it serves. When we uncritically adopt AI systems that replicate and scale existing power imbalances, we are not being neutral. We are making a choice, and we should own that choice rather than hiding behind the word ‘technology’.

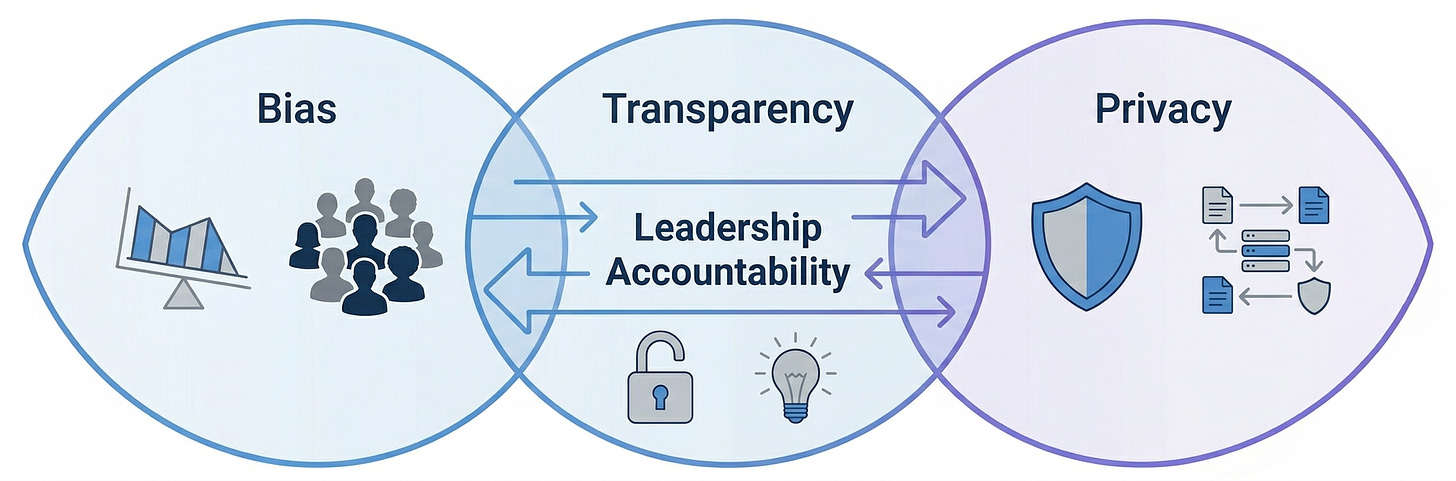

Alongside bias sits the problem of transparency. The most capable AI models tend to be the least interpretable. They produce outputs through processes that even their creators cannot fully explain, which creates a fundamental accountability problem when those outputs shape consequential decisions about health, finance, employment, or legal status. The EU’s AI Act and IEEE’s ethical framework both identify explicability as a core requirement for trustworthy AI, yet the tension between interpretability and performance remains live. Organisations are making real decisions in that gap, often without acknowledging that the gap exists.

Privacy sits at the third corner of this ethical triangle. AI’s requirement for data at scale means that personalisation and surveillance are, architecturally, very similar things. The Stanford HAI report notes that as AI capabilities expand, so does the surface area for manipulation and profiling, and that the individuals most affected are typically those with the least power to resist it. The T20 India policy brief on environmental and ethical AI challenges flags accountability as a governance gap of genuine urgency, arguing that liability frameworks need extending to cover AI-mediated harms with the same rigour applied to any other product or service.

What runs through all of these concerns is a structural observation drawn from the broader AI ethics scholarship: bias in AI is not a technical bug waiting for a patch. It is a structural problem rooted in who builds these systems, whose data is used, and whose interests are centred in the design process. Fixing it requires institutional change, not just algorithmic adjustment. That is harder, slower, and less comfortable than a software update. It is also the only approach that will actually work.

There is, however, real progress to note. The AIHub review of the top AI ethics and policy issues of 2025 reports that AI safety infrastructure grew rapidly and meaningfully during that year. Safety, once discussed primarily in conceptual terms, has evolved into a structured engineering discipline, with independent third-party auditing processes and evaluation centres emerging as a genuine checks-and-balances layer. Leading AI laboratories including OpenAI, Google, Anthropic, and Alibaba adopted standardised benchmarks for assessing deception, persuasion, and planning. The UK proposed its AI Growth Lab, a sandbox for testing new models under real-world conditions. These are meaningful structural improvements, and they matter.

The Environmental Dimension

If the ethical landscape is complicated, the environmental picture is in some respects more concrete, and as a result more difficult to sit with comfortably. The research is increasingly robust, even if the data disclosed voluntarily by AI companies remains frustratingly incomplete.

Training a single large AI model can emit hundreds of metric tons of CO2. The Cornell University roadmap on the environmental impact of AI data centres, published in late 2025, projects annual carbon emissions from AI infrastructure reaching 24 to 44 million metric tons by 2030. The Oeko Institut’s detailed study adds important nuance: inference, the ongoing act of running deployed models to generate outputs, now accounts for 80 to 90 per cent of AI’s operational energy use. Training happens once. Inference happens billions of times daily. The energy cost does not front-load and then plateau; it scales continuously with adoption.
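To make the training-versus-inference point concrete, here is a minimal Python sketch of how the share of energy attributable to inference grows with time in deployment. Every number in it (the training cost, the energy per query, the daily query volume) is a hypothetical placeholder chosen only to show the shape of the curve; none comes from the Cornell or Oeko Institut reports.

```python
def cumulative_energy_kwh(training_kwh, kwh_per_query, queries_per_day, days):
    """One-off training energy plus inference energy accumulated over `days`."""
    return training_kwh + kwh_per_query * queries_per_day * days


def inference_share(training_kwh, kwh_per_query, queries_per_day, days):
    """Fraction of cumulative energy that comes from inference."""
    inference_kwh = kwh_per_query * queries_per_day * days
    return inference_kwh / (training_kwh + inference_kwh)


# Hypothetical illustration values, not measured figures.
TRAINING_KWH = 1_000_000       # spent once, before deployment
KWH_PER_QUERY = 0.001          # energy per generated response
QUERIES_PER_DAY = 10_000_000   # grows with adoption in practice

for days in (30, 365, 3 * 365):
    share = inference_share(TRAINING_KWH, KWH_PER_QUERY, QUERIES_PER_DAY, days)
    print(f"after {days:>4} days: inference is {share:.0%} of total energy")
```

With these placeholder inputs, training dominates in the first month and inference dominates within a year. The sketch holds query volume constant; rising adoption would tilt the balance toward inference even faster.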

Water consumption compounds this picture. Data centres require enormous volumes of water for cooling, and the siting of large AI infrastructure in regions already experiencing water stress creates compounding scarcity problems. The International Science Council’s report and the ArXiv paper on AI’s ecological impact both highlight that the hardware running these systems has a short operational lifespan relative to its manufacturing and materials cost, generating an e-waste problem that is rarely factored into AI’s environmental accounting.

The civil society report from February 2026 challenges one of the most commonly used rhetorical escape hatches for AI companies facing environmental scrutiny: the argument that AI’s footprint is justified, or offset, by its potential to help solve the climate crisis. This is not an entirely false argument. The LSE Grantham Institute’s study found that AI applied at scale in energy and transport could reduce global emissions by 3.2 to 5.4 billion tonnes annually, a figure large enough to take seriously. But potential future benefits do not cancel out present costs, and the civil society report is right to insist on that distinction.

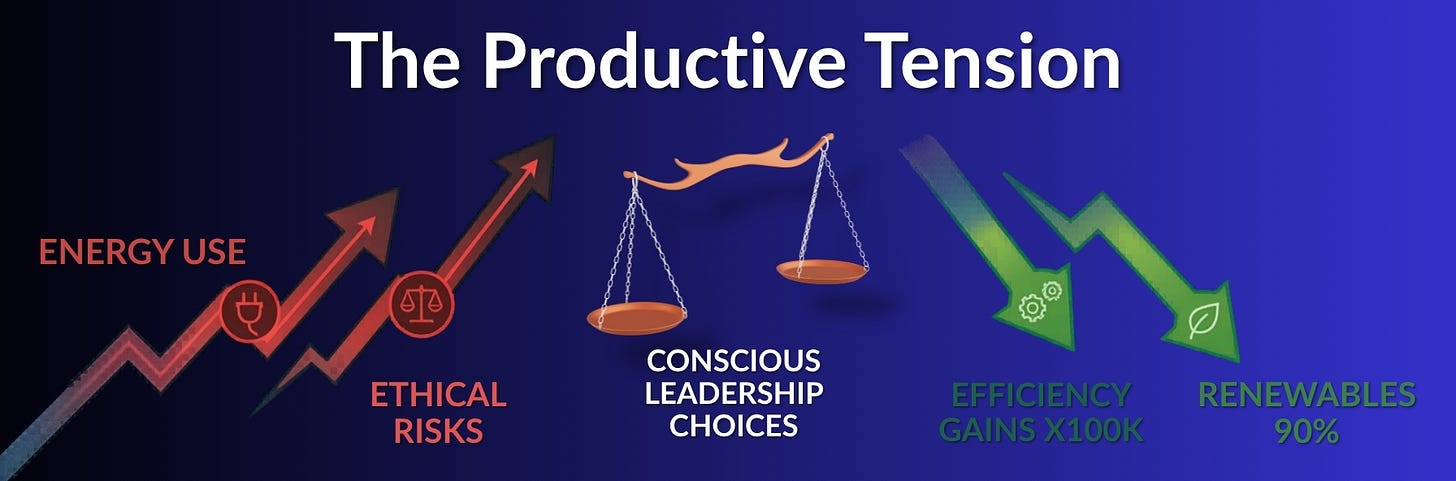

The Journal of Environmental Management’s global assessment ‘The Two Tales of AI’ frames the duality well: in one scenario AI accelerates decarbonisation; in the other, efficiency gains are rapidly consumed by scale and rebound effects. Which story becomes dominant is not determined by the technology. It is determined by choices. That framing resonates with me strongly. In my work with leaders navigating major change programmes, the pattern is consistent: the technology rarely determines the outcome. The decisions made around the technology do.

The Counterbalance: Where Genuine Progress Is Happening

It would be intellectually dishonest to present only the challenges without acknowledging the genuine, evidence-based reasons for optimism, and there are several that deserve serious attention.

Efficiency gains in AI systems have been extraordinary. Nvidia’s research published in late 2025 found that energy efficiency in large language model inference has improved by a factor of 100,000 over the past ten years. That is not a marginal improvement. It means that the AI capabilities available today cost a fraction of what equivalent capabilities would have consumed a decade ago, and the trajectory continues. If this efficiency curve persists, the absolute energy cost of AI’s growth may be significantly lower than projections based on historical consumption patterns suggest.
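For a sense of scale, the ten-year figure can be converted into an implied yearly rate. The short Python sketch below assumes the improvement compounded at a steady rate across the decade, which is a simplification of mine and not something the Nvidia research claims; the function names are illustrative.

```python
import math


def annualised_factor(total_factor, years):
    """Constant yearly multiplier that compounds to `total_factor` over `years`."""
    return total_factor ** (1 / years)


def doubling_time_years(total_factor, years):
    """Years for efficiency to double, under the same steady-compounding assumption."""
    return years * math.log(2) / math.log(total_factor)


yearly = annualised_factor(100_000, 10)
doubling = doubling_time_years(100_000, 10)
print(f"implied improvement: about {yearly:.2f}x per year")
print(f"implied doubling time: about {doubling * 12:.1f} months")
```

Under that assumption the implied rate is a little over threefold per year, with efficiency doubling roughly every seven months. Whether that pace continues is exactly the open question the paragraph above raises.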

The energy transition itself is being accelerated, in part, by AI’s own appetite. Renewables accounted for over 90 per cent of new utility-scale generating capacity in 2024, partly because they are faster to deploy and easier to scale, and the pressure from data centre demand is pushing utilities and investors to accelerate clean energy development. AI is already demonstrating real value in grid management: within power networks, AI improves forecasting and fault detection, reducing outages by 30 to 50 per cent and enabling better integration of renewable energy sources. AI-managed lighting, heating, and cooling in buildings could save 300 TWh annually, equivalent to the total electricity usage of Australia and New Zealand combined.

The Cornell roadmap, for all its sobering projections, also identifies a practical path forward: deploying advanced liquid cooling and improved server utilisation could reduce carbon emissions by a further 7 per cent and lower water use by up to 32 per cent at existing data centres. That is achievable with currently available technology and deliberate infrastructure investment, not a distant aspiration.

A study published in March 2026 found that while AI consumes electricity at the scale of a country like Iceland, its overall contribution to global emissions may be far smaller than the headline figures suggest, and that the more localised impacts around specific data centres are the more pressing and tractable concern. That reframing matters: it points the conversation toward targeted, solvable problems rather than undifferentiated alarm.

I find this genuinely encouraging, and not in a naive way. I have been in enough strategic change programmes to know that the problems that kill organisations are rarely the ones nobody saw coming. They are the ones people saw coming but felt too big to address incrementally. AI’s environmental and ethical challenges are large, but they have identifiable entry points, measurable indicators, and growing bodies of practice around mitigation. That is a different kind of problem from an intractable one.

Three Layers of Responsibility

The research points consistently to the same conclusion: the risks are real, the trajectory is not fixed, and the outcomes depend on choices made at multiple levels simultaneously. What follows is not a set of abstract principles but a practical framework, organised by where the levers actually sit.

Layer One: Global AI Infrastructure

The first layer sets the conditions within which everything else operates. AI infrastructure, meaning data centres, energy grids, chip supply chains, and training pipelines, is concentrated in relatively few hands and governed by a patchwork of national and voluntary frameworks that does not match the global scale of the technology.

The regulatory picture is uneven in ways that matter. The EU’s AI Act is the most comprehensive legislative attempt yet to codify requirements around transparency, accountability, and risk management. But regulation is jurisdictionally bounded and AI is not. Without binding international frameworks, countries that establish robust ethical standards risk simply displacing irresponsible AI development to jurisdictions with fewer constraints, rather than raising the global floor. Progress on multilateral coordination has been slow, and that slowness is itself a policy choice with consequences.

The Cornell roadmap and the Oeko Institut research both identify mandatory disclosure of energy and water consumption figures from AI operators as a high-leverage intervention, applying transparency standards already common in other high-impact sectors. Carbon pricing mechanisms that account for AI infrastructure’s footprint, alongside renewable energy procurement requirements that go beyond matching to actual clean operation, are identified across multiple reports as necessary systemic interventions. The OECD’s methodological work on measuring environmental impacts creates the foundation for this; what is lacking is regulatory will to make it compulsory.

The optimistic read at this level is that the direction of travel in several major jurisdictions is broadly correct. The UK’s AI Growth Lab, the EU’s enforcement of the AI Act, and the emergence of independent auditing infrastructure all represent structural improvements that were not in place five years ago. The policy scaffolding is being built. The question is whether it is being built quickly enough.

Layer Two: Organisational Decisions and Deployment

This is where most leaders reading this will find their most direct leverage. Organisations adopting AI are not passive recipients of the technology’s consequences. They are active participants in shaping them. The choices made about which tools to deploy, for what purposes, with what governance structures, and with what accountability to those affected, are consequential choices that aggregate into the overall picture.

The ethical frameworks reviewed across this research, whether IEEE, EU, or OECD, converge on a set of principles that translate directly into practice. Transparency means communicating clearly when AI systems are being used and how they affect decisions. Accountability means establishing clear lines of responsibility for AI-mediated outcomes and ensuring redress mechanisms exist when those outcomes cause harm. Proportionality means matching the capability and opacity of an AI system to the stakes of its application. Human oversight means maintaining genuine human judgement in high-stakes decisions rather than treating AI outputs as final.

I speak to leaders across a range of industries, and the pattern I see most consistently is not malicious intent. It is governance that lags behind adoption. Organisations move fast to implement AI tools because the competitive pressure to do so is real, and the ethical and environmental review structures simply have not kept pace. That is a fixable problem, and the organisations that fix it early build something genuinely durable: the credibility that comes from being able to demonstrate that their AI use is both effective and accountable.

Build ethical review into early stages of AI procurement and development cycles, not as an afterthought audit at the point of deployment. The Stanford HAI report and the USC Annenberg analysis both note that organisations embedding ethical review early produce materially better outcomes than those treating it as a compliance checkbox completed after the fact.

Ask AI vendors directly for energy and water consumption data for the services being purchased. This data exists. Many vendors simply do not publish it unless asked. Factoring it into procurement decisions applies the same supply chain responsibility that organisations increasingly apply to other resource categories.

Include AI energy consumption in existing carbon accounting and sustainability reporting frameworks. This is methodologically feasible today, using the OECD’s measurement guidance as a basis, and it closes the gap between an organisation’s stated sustainability commitments and its actual AI-related footprint.

Invest in AI literacy across leadership teams, not just technical fluency but genuine critical capacity: understanding failure modes, limitations, and the structural contexts in which these systems operate. The AIHub ethics review identifies institutional literacy as increasingly central to ethical deployment, and the responsibility to build it sits with organisations, not individual users.

Smaller, purpose-built models for specific organisational tasks use a fraction of the energy of large generalised systems. Where the use case allows it, choosing the efficient tool rather than the most impressive one is a meaningful and immediately actionable environmental decision.
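The carbon accounting recommendation above comes down to simple arithmetic once a vendor supplies energy figures: energy used multiplied by the carbon intensity of the grid that supplied it. The Python sketch below is a hypothetical illustration of that calculation only. The class name, service names, and all numbers are invented for the example, and it is not a rendering of the OECD measurement guidance.

```python
from dataclasses import dataclass


@dataclass
class AIServiceUsage:
    """One AI service over a reporting period (all fields are illustrative)."""
    name: str
    energy_kwh: float            # vendor-reported or estimated energy use
    grid_kg_co2e_per_kwh: float  # carbon intensity of the supplying grid

    def emissions_kg(self) -> float:
        return self.energy_kwh * self.grid_kg_co2e_per_kwh


def total_emissions_kg(usages) -> float:
    """Sum emissions across services for inclusion in a sustainability report."""
    return sum(u.emissions_kg() for u in usages)


# Hypothetical services and figures, for illustration only.
usages = [
    AIServiceUsage("chat assistant", energy_kwh=1200, grid_kg_co2e_per_kwh=0.4),
    AIServiceUsage("document search", energy_kwh=300, grid_kg_co2e_per_kwh=0.1),
]

for u in usages:
    print(f"{u.name}: {u.emissions_kg():.1f} kg CO2e")
print(f"total: {total_emissions_kg(usages):.1f} kg CO2e")
```

Even a rough table like this makes the gap visible: where a vendor cannot supply the energy figure at all, that absence is itself a finding worth recording.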

Layer Three: Individual Personal Responsibility

The third layer is the one most easily underestimated, and it is where this article’s personal opening becomes most relevant. The individual choices made by professionals engaging with AI, about how they use it, how they talk about it, and how critically they engage with its outputs, matter more than is commonly acknowledged.

This is, honestly, where my own journey with this research lands. My discomfort with floundering in anecdotal arguments was not just about personal pride. It was about recognising that the conversations leaders have about AI, the way they frame it for their teams, the questions they do or do not ask, shape organisational culture around the technology. If those conversations are evidence-free, the decisions that follow them will be too.

The most practical starting point is developing genuine AI literacy rather than operational fluency alone. Knowing how to prompt a model is a useful skill. Understanding how training data affects outputs, what the limitations of a given system are, and when a tool is being used outside the domain where it performs reliably is a more important skill. The gap between those two states is largely a matter of deliberate effort.

Choose AI tools from providers who publish credible environmental and ethical accountability data. Where that data is not published, ask for it. That question, asked often enough, becomes a market signal.

Be deliberate about when not to use AI. The AIHub review highlights a growing recognition that declining to deploy AI in certain contexts is a legitimate and sometimes correct ethical choice, not a failure to modernise. Building personal judgement about those boundaries is itself a form of literacy.

Bring evidence into team conversations rather than anecdote and assumption. Sharing credible sources, raising inconvenient questions, and being willing to sit with complexity rather than defaulting to comfortable narratives is a contribution any individual can make regardless of their seniority or technical background.

Pay attention to the efficiency of your own AI usage. Running repeated large queries for tasks that a simpler tool could handle has a real, if small, energy cost that accumulates across millions of users. Developing habits of proportionate tool selection is a modest act with aggregate significance.

Quick Wins: Where to Start This Week

The research can feel enormous in scope, but the entry points are practical and immediate. None of the following requires budget approval, a board decision, or a technical background. They are simply the actions of someone choosing to engage with this topic seriously.

For individuals:

Ask your AI tool of choice who made it, what energy it runs on, and whether the provider publishes environmental accountability data. The act of asking matters, even if the answer is incomplete. It builds your own literacy and sends a signal to the market.

Before defaulting to a large, high-powered AI model for a task, spend thirty seconds asking whether a simpler tool would do the job. Proportionate tool selection is one of the most immediate and overlooked ways individuals can reduce their personal AI footprint.

Read one piece of primary research on AI ethics or environmental impact, not a summary, not a social media post, but a full article or institutional report. The gap between what the evidence says and what most people believe it says is significant. Closing that gap personally is the first step to closing it in the rooms you operate in.

For teams and organisations:

Put ‘AI ethics and environmental impact’ on the agenda of your next strategy or leadership team meeting, not as a risk item to be managed but as a genuine conversation about values and governance. The AIHub ethics review from 2026 identifies the absence of structured organisational conversation as one of the primary reasons ethical AI deployment lags behind AI adoption.

Audit your current AI tool stack against two questions: does each tool serve a clear purpose proportionate to its cost, and does the vendor provide any transparency on energy and environmental data? You do not need to act immediately on the answers, but having them changes the quality of your next procurement decision.

Identify one AI use case in your organisation where a smaller, purpose-built model could replace a large generalised one. The energy saving at individual level is modest; the cultural signal of making that choice deliberately is considerable.

For leaders with broader influence:

Raise the question of mandatory environmental disclosure with any AI vendor you have a relationship with. The OECD’s measurement framework already provides the methodology; what is missing is the expectation from buyers that vendors use it.

Support or sponsor AI literacy investment in your organisation that goes beyond tool training. The difference between a workforce that can use AI and one that can think critically about it is the difference between adoption and governance.

The Productive Tension: A Genuinely Optimistic Conclusion

The research surveyed here, spanning environmental impact studies from Cornell, the OECD, and the Oeko Institut, ethical analyses from USC Annenberg and Stanford HAI, policy briefs from T20 India, the civil society report on AI climate claims, and the LSE Grantham Institute’s dual-perspective work, does not resolve into a simple verdict. But the fuller picture, including the evidence on extraordinary efficiency gains, accelerating renewable energy investment, and maturing ethics infrastructure, points somewhere more hopeful than the headlines typically suggest.

The numbers that initially gave me pause, the projections on exponential power and water consumption, are real. But so is this: energy efficiency in large language model inference has improved by a factor of 100,000 over the past decade. Renewables accounted for over 90 per cent of new utility-scale generating capacity in 2024, partly driven by the urgency that AI’s own energy appetite has created. AI is already reducing grid outages by 30 to 50 per cent and enabling cleaner energy integration at scale. The same technology creating the problem is actively contributing to the solutions, provided the deployment decisions are made consciously.

The sarcastic sign-off from the NotebookLM audio summary deserved its laugh, but it is not the final word. The research, taken in full, suggests that the negative impacts of AI are real, currently underestimated by most organisations, and genuinely mitigable. Not eventually, not hypothetically: now, with currently available tools, frameworks, and decisions. The three layers of responsibility described in this article, global policy, organisational governance, and individual accountability, are all moving. Not fast enough, but in the right direction, and with real momentum building.

The gap between the evidence and the average team meeting conversation about AI remains significant. But it is a gap that closes one conversation at a time: one honest question asked, one piece of primary research read, one procurement decision made with environmental accountability in mind. The scale of the challenge is not a reason for paralysis. It is a reason to start with the quick wins available this week, and to build from there with the seriousness the evidence deserves.

The AI reckoning is real. So is the opportunity to get this right.

If you are new here, I am Lee Whitmore, a leadership coach, author, and host of the LevelUp Leadership podcast. If you want a deeper, practical guide to leading in this landscape, my book ‘Enhanced Leadership’ goes further into the tools, mindsets, and conversations that help you and your organisation adapt. You can find it here: https://mybook.to/EnhancedLeadership

Until next time, keep leading, keep learning, and keep levelling up!

Follow on LinkedIn - Spotify - YouTube - Apple

References

Cornell University - “‘Roadmap’ shows environmental impact of AI data center boom” (Nov 2025)
https://news.cornell.edu/stories/2025/11/roadmap-shows-environmental-impact-ai-data-center-boom

Oeko Institut - “Environmental Impacts of Artificial Intelligence” (Apr 2025)
https://www.oeko.de/fileadmin/oekodoc/Report_KI_ENG.pdf

International Science Council - “Considerations on environmental impact of AI in science” (Sep 2025)
https://council.science/wp-content/uploads/2025/09/Considerations-on-the-environmental-impact-of-AI-in-science-FINAL.pdf

ArXiv - “Assessing the Ecological Impact of AI” (Jul 2025)
https://arxiv.org/pdf/2507.21102.pdf

OECD - “Measuring environmental impacts of artificial intelligence compute and applications” (Nov 2022)
https://www.oecd-ilibrary.org/docserver/7ba4ec34-en.pdf

T20 India Policy Brief - “Environmental and Ethical Challenges of AI” (Jul 2023)
https://t20ind.org/wp-content/uploads/2023/07/T20_PolicyBrief_TF7_Environmental-Ethical-AI.pdf

Civil Society Report - “AI climate benefits overstated” (Feb 2026)
https://dig.watch/updates/ai-climate-benefits-civil-society-report

LSE Grantham Institute - Climate studies (2025)
https://www.lse.ac.uk/granthaminstitute/

Journal of Environmental Management - “The Two Tales of AI” (Sep 2025)
https://www.sciencedirect.com/science/article/abs/pii/S0301479725027896

Stanford HAI - AI Index Report Chapter 3
https://hai.stanford.edu/assets/files/hai_ai-index-report-2025_chapter3_final.pdf

Google - Responsible AI Progress Report (Feb 2025)
https://ai.google/static/documents/ai-responsibility-update-published-february-2025.pdf

USC Annenberg - “Ethical Dilemmas of AI”
https://annenberg.usc.edu/research/center-public-relations/usc-annenberg-relevance-report/ethical-dilemmas-ai

Zendata - “AI Ethics 101”
https://www.zendata.dev/post/ai-ethics-101

AIHub - “Top AI Ethics Issues 2025” (Mar 2026)
https://aihub.org/2026/03/04/top-ai-ethics-and-policy-issues-of-2025-and-what-to-expect-in-2026/

Nvidia - “AI Energy Innovation”
https://blogs.nvidia.com/blog/ai-energy-innovation-climate-research/

Optera Climate - “2026 AI Energy Predictions”
https://opteraclimate.com/2026-predictions-how-ai-will-impact-energy-use-and-climate-work/

ScienceDaily - “AI Energy Study” (Mar 2026)
https://www.sciencedaily.com/releases/2026/03/260318033103.htm